Using Multi-LLM Systems for Investigations and Ediscovery: Smarter and Way More Cost-Effective

By John Tredennick, Dr. William Webber and Lydia Zhigmitova

The legal industry is witnessing a revolution with the adoption of AI for its core functions. There are hundreds, if not thousands, of new software applications using generative AI. Most use OpenAI’s latest large language model (LLM), GPT-4, and are seemingly locked into one vendor and one model.

We chose a different approach for DiscoveryPartner, our GenAI ediscovery platform. It can access GenAI products from multiple vendors for different tasks. Why do this? Because it affords the flexibility to use the best, most cost-effective LLM for each task required. And it avoids keeping all our eggs in one AI basket.

As you will quickly see, it’s a much smarter way to go.

Why Build a Multi-LLM Framework?

We chose to build a multi-LLM framework because we have found that lower-priced LLMs can handle certain tasks as well as their higher-priced counterparts, and often much more quickly.

A multi-LLM framework gives our clients the flexibility to use different LLMs for different purposes. It lets them use faster and cheaper models for routine work while reserving the most sophisticated (and expensive) models for synthesis, analysis and reports. This approach is faster, more flexible and more cost-effective than relying on a single model from a single vendor.

How Does a Multi-LLM System Work?

DiscoveryPartner uses LLMs from several providers, including OpenAI, Microsoft and Anthropic, for document analysis. By supporting two key document analysis functions, summarization and synthesis, it illustrates a new paradigm in legal investigations.

Document Summarization

Once relevant documents are identified, the user can direct the system to summarize them based on a topic description. The LLM first summarizes each document against that topic request. We then submit multiple summaries, rather than the full documents, to the LLM for analysis. Because summaries are much shorter than the documents themselves, we can fit far more of them into a single request.

Specifically, you tell the LLM about the nature of your investigation and the kind of information you want to review. You might also ask it to identify people and dates mentioned in the document.
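The two-stage flow described above (summarize each document against a topic, then analyze the summaries together) can be sketched in a few lines of Python. This is a minimal sketch, not DiscoveryPartner’s implementation: the `complete` callable, the prompt wording and the model labels `summary-model` and `synthesis-model` are hypothetical placeholders for a real vendor API call.

```python
from typing import Callable, List

# Hypothetical client signature: (model_name, prompt) -> completion text.
# A real implementation would wrap a vendor SDK call here.
Complete = Callable[[str, str], str]


def summarize_documents(docs: List[str], topic: str,
                        complete: Complete, model: str) -> List[str]:
    """Stage 1: one topic-focused summary per document."""
    summaries = []
    for doc in docs:
        prompt = (
            f"Investigation topic: {topic}\n"
            "Summarize the document below as it relates to this topic, "
            "and list any people and dates it mentions.\n\n"
            f"Document:\n{doc}"
        )
        summaries.append(complete(model, prompt))
    return summaries


def synthesize(summaries: List[str], question: str,
               complete: Complete, model: str) -> str:
    """Stage 2: a single request that reasons over all the summaries."""
    numbered = "\n\n".join(
        f"Summary {i}:\n{s}" for i, s in enumerate(summaries, start=1)
    )
    prompt = f"{question}\n\nBase your answer only on these summaries:\n\n{numbered}"
    return complete(model, prompt)


def fake_complete(model: str, prompt: str) -> str:
    # Offline stand-in so the sketch runs without an API key.
    return f"<{model}: {len(prompt)}-char prompt>"


docs = ["Email from A to B about the merger.", "Board minutes from the May meeting."]
summaries = summarize_documents(docs, "merger negotiations", fake_complete, "summary-model")
report = synthesize(summaries, "Build a timeline of the negotiations.",
                    fake_complete, "synthesis-model")
print(report)
```

Because the model is a parameter of each stage, the summarization and synthesis steps can be pointed at different vendors without changing the pipeline itself.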

DiscoveryPartner offers these options for document summarization:

We have found that Claude Instant (100K) is quick and efficient, and that its document summaries are roughly equivalent to GPT-4’s. In fact, Claude Instant produces summaries faster and costs a fraction of what you pay GPT-4 for the same work. For that reason, we recommend Claude Instant, particularly when you are summarizing 300 or more documents.

There is a second reason we like Claude Instant for summarization work: its context window is much larger (100K tokens vs. 8K or 32K for GPT-4). That makes it well suited to analyzing long documents that won’t fit in a smaller context window.

Synthesis and Reporting

The second step is to synthesize and report on information across the documents selected for summarization. This typically involves a high-level analysis of the documents tailored to your information need and more complex reporting requests.

For synthesizing and reporting across multiple documents, we recommend top-of-the-line LLMs like GPT-4 and Claude 2, which excel at producing insightful reports on legal issues, positions, people involved and timelines. Both cost more and respond somewhat more slowly than their lighter siblings, but the higher-quality output is worth both trade-offs.
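One way to express “the right model for each task” is a small task-to-model table with per-matter overrides. The sketch below is hypothetical: the task names, the model labels (which are not exact API model IDs) and the `MatterSettings` class are illustrative, not DiscoveryPartner’s actual configuration format. The defaults follow the recommendations above.

```python
from dataclasses import dataclass, field
from typing import Dict

# Illustrative defaults: a fast, inexpensive model for per-document
# summaries and a top-tier model for cross-document synthesis.
# Labels are placeholders, not exact vendor API model IDs.
DEFAULT_MODELS: Dict[str, str] = {
    "summarize": "claude-instant",
    "synthesize": "gpt-4",
}


@dataclass
class MatterSettings:
    """Per-matter settings; an override wins over the default for its task."""
    overrides: Dict[str, str] = field(default_factory=dict)

    def model_for(self, task: str) -> str:
        if task in self.overrides:
            return self.overrides[task]
        if task in DEFAULT_MODELS:
            return DEFAULT_MODELS[task]
        raise KeyError(f"No model configured for task {task!r}")


default_matter = MatterSettings()
premium_matter = MatterSettings(overrides={"summarize": "claude-2"})
print(default_matter.model_for("summarize"))  # claude-instant
print(premium_matter.model_for("summarize"))  # claude-2
```

Keeping the choice in data rather than code means switching a matter to a more powerful model is a settings change, not a redeployment.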

How Much Can a Multi-LLM System Save in Processing Costs?

Many of us started using ChatGPT when it was free. Some of us moved to the $20-per-month version to get faster responses and access to newer models, but daily usage was still capped. These licenses aren’t suitable for commercial purposes and are not our focus here.

For legal systems, we need to move to a commercial license and access GPT, the underlying engine for ChatGPT, through an API (application programming interface).

Before we get to specific prices for different models, let’s talk a bit about tokens. They are central to the analysis of which models are best suited for different purposes.

About Tokens

Tokens are the basic units of text or code that an LLM uses to process and generate language. Tokens can be punctuation, words, subwords or other segments of text or code. A token count of 1,000 typically equates to about 750 English language words.
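The 1,000-tokens-to-750-words rule of thumb is easy to turn into a quick estimator. This is a rough heuristic for English prose, not a tokenizer: exact counts depend on each model’s own tokenizer (for OpenAI models, the open-source `tiktoken` library can count them). The function names below are hypothetical.

```python
import math

# Rule of thumb from the text: 1,000 tokens is about 750 English words,
# i.e. roughly 0.75 words per token.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(word_count: int) -> int:
    """Rough token estimate for English prose; rounds up to stay conservative."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def estimate_words(token_count: int) -> int:
    """Rough word estimate for a given token budget."""
    return round(token_count * WORDS_PER_TOKEN)


print(estimate_tokens(1_500))   # 2000
print(estimate_words(100_000))  # 75000
```

By this estimate, a 1,500-word document is about 2,000 tokens, and a 100K-token context window holds roughly 75,000 words.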

How many tokens in a prompt? This obviously depends on the length of information you send to the LLM. For our purposes, however, prompt lengths can be long because we typically send the text of ediscovery documents (or summaries of that text) to the LLM along with our request or question.

Why send the text? Because, for ediscovery at least, we typically ask the LLM to base its answer on the text of one or more discovery documents (email, documents, transcripts, etc.). As a result, the volume of text transmitted to the LLM can be much larger than the response.

For pricing purposes, we have found that the average volume of prompts is roughly ten times larger than the average volume of responses. Put another way, we typically send 1,000 tokens to the system for every 100 tokens received in response.

Why does this matter? Because most vendors charge one price for the volume of tokens submitted in the prompt (input) and another, larger price for the volume of tokens returned by the LLM in its answer (output).
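To see why split pricing matters, here is a minimal Python sketch of the cost of one request billed separately for input and output. The prices are assumptions for illustration (roughly GPT-4 (8K) list prices in mid-2023; substitute current vendor prices), and `request_cost` is a hypothetical helper.

```python
# Assumed per-1,000-token prices in dollars, for illustration only.
INPUT_PRICE_PER_1K = 0.03
OUTPUT_PRICE_PER_1K = 0.06


def request_cost(input_tokens: int, output_tokens: int,
                 input_price: float = INPUT_PRICE_PER_1K,
                 output_price: float = OUTPUT_PRICE_PER_1K) -> float:
    """Dollar cost of one request, with input and output billed separately."""
    return (input_tokens / 1000) * input_price + (output_tokens / 1000) * output_price


# The 10:1 prompt-to-response ratio described above.
total = request_cost(1_000, 100)
input_share = request_cost(1_000, 0) / total
print(f"total ${total:.4f}, input share {input_share:.0%}")
```

Even though output tokens cost twice as much per token under these assumptions, the prompt side accounts for about five-sixths (83%) of the bill, so the input price carries the most weight when comparing models for document-heavy work.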

Commercial Pricing

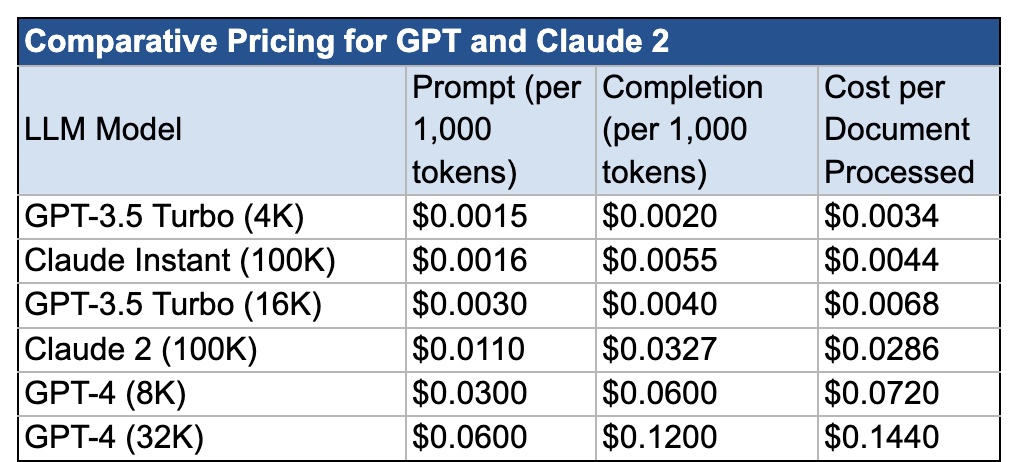

Here is a chart showing current pricing for two LLM families: GPT (from OpenAI and Microsoft) and Claude (from Anthropic). Both GPT and Claude come in several models, priced according to their capabilities. Microsoft and OpenAI charge the same prices, so they are grouped together.

The figures in parentheses give the size of each model’s context window. In essence, the context window limits how much text a single request can hold, counting both the prompt and the response. A larger window is better, but it also costs more. You can read more about context windows in our article “Are LLMs Like GPT Secure?”

Prices per 1,000 tokens are relatively small. To put things in perspective, we estimated the cost to send and return information for a single document that is 2,000 tokens in length (about 1,500 words). This is a representative average document length for ediscovery, though it will vary between cases and collections.

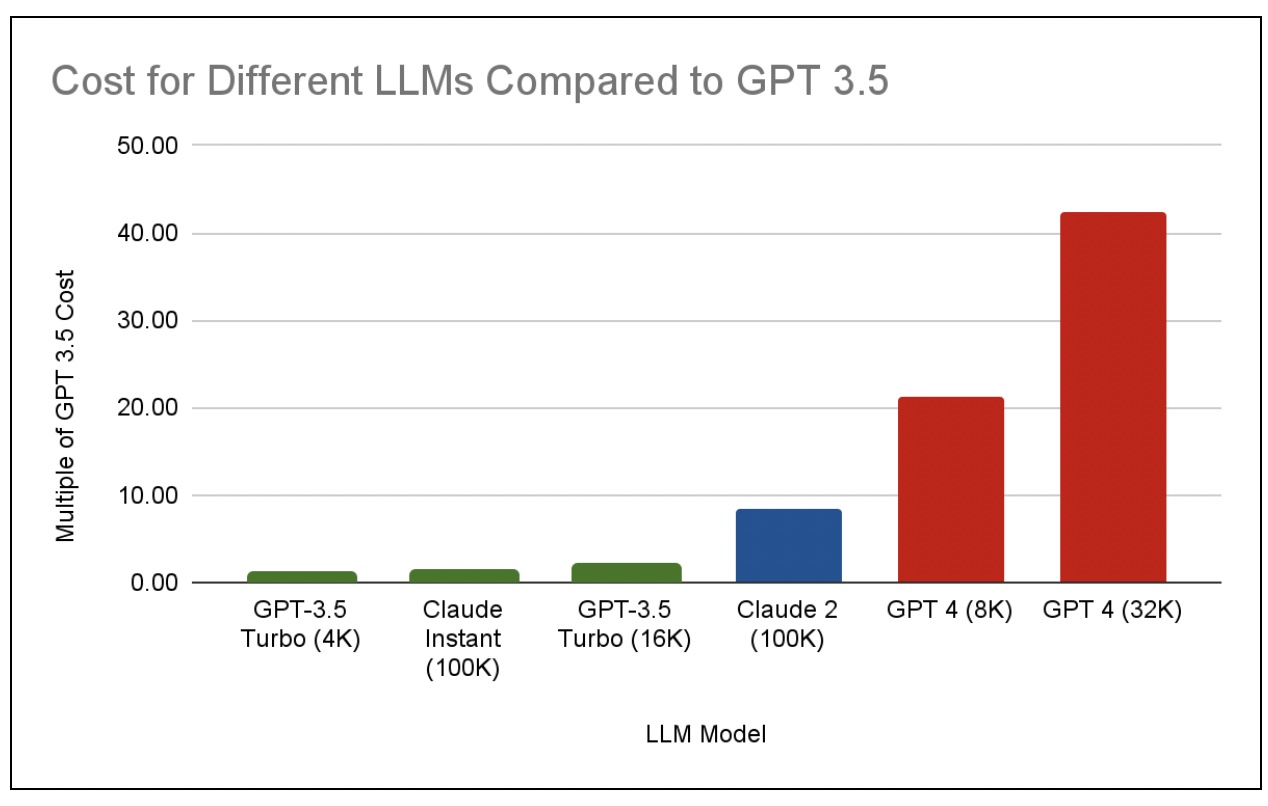

You can quickly see that the cost to use GPT-4 is much greater than for lesser models like GPT-3.5. This chart shows relative pricing as a multiple of the cheapest model, GPT-3.5 (4K):

GPT-4 (32K) costs over 40 times more than GPT-3.5. GPT-4 (8K) costs over 20 times more than GPT-3.5. Do we need the power of GPT-4 for every task we might run? That is the question we address in the next section.
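The per-document arithmetic behind these multiples can be reproduced in a short Python sketch. It assumes a 2,000-token document, a 200-token response (the 10:1 ratio above) and per-1,000-token prices that we believe match OpenAI’s mid-2023 list prices; treat the prices as assumptions and check current vendor pricing.

```python
# Assumed (input, output) prices in dollars per 1,000 tokens.
PRICES = {
    "GPT-3.5 (4K)": (0.0015, 0.002),
    "GPT-4 (8K)": (0.03, 0.06),
    "GPT-4 (32K)": (0.06, 0.12),
}

DOC_TOKENS = 2_000                  # representative document, about 1,500 words
RESPONSE_TOKENS = DOC_TOKENS // 10  # 10:1 prompt-to-response ratio


def doc_cost(model: str) -> float:
    """Dollar cost to send one document and receive one response."""
    inp, out = PRICES[model]
    return DOC_TOKENS / 1000 * inp + RESPONSE_TOKENS / 1000 * out


baseline = doc_cost("GPT-3.5 (4K)")
for model in PRICES:
    cost = doc_cost(model)
    print(f"{model:<13} ${cost:.4f} per document  {cost / baseline:5.1f}x")
```

Under these assumptions, GPT-4 (8K) comes out at about 21 times and GPT-4 (32K) at about 42 times the cost of GPT-3.5 (4K) per document.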

Getting Smart About How We Use LLMs

As we pointed out, using different LLMs for different jobs affords the flexibility to use the best, most cost-effective LLM for each task required. Pricing can make a difference when two tools can do the same job equally well. If one of the LLMs costs fifty times more than the other, you might want to pay attention.

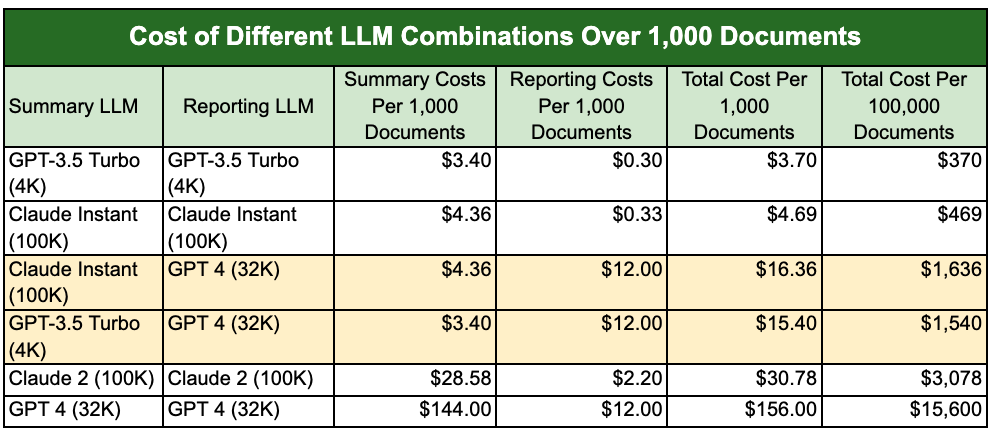

With a multi-LLM framework, our clients can mix and match LLMs and individual models to get the most cost-effective results. Here is a chart showing how this approach can save on LLM costs using different combinations of LLMs and models.

You can quickly see how costs vary between different combinations of LLMs and models. In this case, we have highlighted two combinations that we think represent smart choices. We urge clients to consider GPT-3.5 or Claude Instant for summary work. Why? Because they are much faster and less costly than their more powerful siblings. And in most cases, the summaries from the more powerful models don’t improve enough to justify the added cost. Where a client wants the additional horsepower of GPT-4 or Claude 2, it is easy to change the setting.
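The savings from mixing models can be estimated with a simple cost model: summarize every document with one model, then synthesize across all the summaries with another. The sketch below is hypothetical: the model labels, the prices (assumed GPT-3.5-class and GPT-4-class rates), the 200-token summary length and the 1,000-token report length are all illustrative assumptions.

```python
# Hypothetical (input, output) prices in dollars per 1,000 tokens.
PRICES = {
    "fast": (0.0015, 0.002),   # e.g. a GPT-3.5-class model (assumed prices)
    "premium": (0.03, 0.06),   # e.g. a GPT-4-class model (assumed prices)
}


def cost(model: str, input_tokens: int, output_tokens: int) -> float:
    inp, out = PRICES[model]
    return input_tokens / 1000 * inp + output_tokens / 1000 * out


def pipeline_cost(n_docs: int, summary_model: str, synthesis_model: str,
                  doc_tokens: int = 2_000, summary_tokens: int = 200,
                  report_tokens: int = 1_000) -> float:
    """Summarize each document, then synthesize over all the summaries.

    Ignores prompt-instruction overhead and any batching needed when the
    summaries exceed the synthesis model's context window.
    """
    summarize = n_docs * cost(summary_model, doc_tokens, summary_tokens)
    synthesize = cost(synthesis_model, n_docs * summary_tokens, report_tokens)
    return summarize + synthesize


mixed = pipeline_cost(300, "fast", "premium")
premium_only = pipeline_cost(300, "premium", "premium")
print(f"mixed: ${mixed:.2f}  premium only: ${premium_only:.2f}")
```

For 300 documents under these assumptions, the mixed pipeline costs about $2.88 versus about $23.46 for the premium model alone, because summarization, the high-volume step, dominates the bill.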

Why consider Claude Instant or Claude 2 in this mix? One simple reason is the larger context window. At 100K tokens, Claude 2’s context window is roughly three times the size of GPT-4’s largest (32K) window, which can be important for large documents. For example, most transcripts fit within Claude Instant’s or Claude 2’s context window, whereas they would have to be broken up to fit GPT’s smaller windows. In many cases, this makes the difference. (We are considering checking text sizes before processing so that larger documents are automatically sent to Claude 2, even when GPT is the selected model.)
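Size-based routing of this kind could look like the following minimal sketch: estimate the document’s tokens, add room for instructions and the answer, and pick the first preferred model whose context window fits. The model labels, the 500-token prompt overhead and the 1,000-token response reserve are hypothetical assumptions; a production version would count tokens with each vendor’s tokenizer.

```python
import math
from typing import List, Optional, Tuple

# Candidate models in order of preference, with context-window sizes in
# tokens. Labels are illustrative: GPT-4 (8K) first, Claude 2 (100K) as the
# fallback for long documents.
CANDIDATES: List[Tuple[str, int]] = [
    ("gpt-4-8k", 8_192),
    ("claude-2-100k", 100_000),
]
PROMPT_OVERHEAD = 500     # assumed tokens for instructions and the question
RESPONSE_RESERVE = 1_000  # assumed tokens reserved for the model's answer


def estimate_tokens(text: str) -> int:
    # Rule of thumb: ~750 words per 1,000 tokens, i.e. ~4/3 tokens per word.
    return math.ceil(len(text.split()) * 4 / 3)


def route(text: str) -> Optional[str]:
    """Return the first preferred model whose window fits the request,
    or None if the document must be split into chunks."""
    needed = estimate_tokens(text) + PROMPT_OVERHEAD + RESPONSE_RESERVE
    for model, window in CANDIDATES:
        if needed <= window:
            return model
    return None


print(route("word " * 1_000))    # gpt-4-8k
print(route("word " * 10_000))   # claude-2-100k
print(route("word " * 100_000))  # None
```

Returning `None` rather than silently truncating leaves the caller to decide whether to chunk the document or flag it for review.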

Ultimately, the smart play depends on your matter and your documents. The one thing we can say for sure is that having a multi-LLM framework affords the flexibility to use the best, most cost-effective LLM for each task required. That is why we chose a different path than other vendors.

Adding New LLMs

There is one more important advantage to our approach. As new and even more powerful LLMs hit the market, we can integrate them into our platform. We connect to commercial versions of LLMs like GPT and Claude through their APIs, which gives us the flexibility to connect to, and take advantage of, different LLMs.
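A common way to make new LLMs pluggable is an adapter registry: every vendor integration implements the same small interface, and the rest of the pipeline looks providers up by name. The sketch below is a hypothetical illustration of that pattern, not Merlin’s code; `LLMProvider`, `register`, `get_provider` and `EchoProvider` are invented names, and a real adapter would call the vendor’s API.

```python
from typing import Callable, Dict, Protocol


class LLMProvider(Protocol):
    """Minimal interface every vendor adapter implements (hypothetical)."""

    def complete(self, prompt: str, max_tokens: int) -> str: ...


# Maps a provider name to a zero-argument factory that builds the adapter.
_REGISTRY: Dict[str, Callable[[], LLMProvider]] = {}


def register(name: str):
    """Class decorator that adds an adapter to the registry under `name`."""
    def decorator(factory):
        _REGISTRY[name] = factory
        return factory
    return decorator


def get_provider(name: str) -> LLMProvider:
    try:
        factory = _REGISTRY[name]
    except KeyError:
        raise ValueError(f"no provider registered as {name!r}") from None
    return factory()


@register("echo")
class EchoProvider:
    """Offline stand-in; a real adapter would call the vendor's API here."""

    def complete(self, prompt: str, max_tokens: int) -> str:
        return prompt[:max_tokens]


print(get_provider("echo").complete("hello world", 5))  # hello
```

With this structure, supporting a newly released model means writing and registering one adapter class; code that calls `get_provider(...)` does not change.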

Hardly a week goes by without an announcement of a new “more powerful” large language model that will knock the current AI leaders off their pedestals. As these new LLMs continue to emerge, our adaptable framework ensures that we remain at the forefront of technology, offering our clients an agile, powerful, and economically wise tool that elevates their practice in an increasingly competitive and technology-driven environment.

About the Authors

John Tredennick (JT@Merlin.Tech) is the CEO and founder of Merlin Search Technologies, a software company leveraging generative AI and cloud technologies to make investigation and discovery workflow faster, easier and less expensive. Prior to founding Merlin, Tredennick had a distinguished career as a trial lawyer and litigation partner at a national law firm.

With his expertise in legal technology, he founded Catalyst in 2000, an international ediscovery technology company that was acquired in 2019 by a large public company. Tredennick regularly speaks and writes on legal technology and AI topics, and has authored eight books and dozens of articles. He has also served as Chair of the ABA’s Law Practice Management Section.

Dr. William Webber (wwebber@Merlin.Tech) is the Chief Data Scientist of Merlin Search Technologies. He completed his PhD in Measurement in Information Retrieval Evaluation at the University of Melbourne under Professors Alistair Moffat and Justin Zobel, and his post-doctoral research at the E-Discovery Lab of the University of Maryland under Professor Doug Oard.

With over 30 peer-reviewed scientific publications in the areas of information retrieval, statistical evaluation, and machine learning, he is a world expert in AI and statistical measurement for information retrieval and ediscovery. He has almost a decade of industry experience as a consulting data scientist to ediscovery software vendors, service providers, and law firms.

Lydia Zhigmitova (LZhigmitova@Merlin.Tech) is Senior Prompt Engineer at Merlin Search Technologies. She received her Masters in Philology from the Pushkin State Russian Language Institute, Moscow. Her thesis title was “Metaphors in Scientific Communication and Their Role in Teaching Russian as a Foreign Language” (Роль метафоры в научном лингвистическом тексте и её место на занятиях по РКИ).

Ms. Zhigmitova has a decade of experience in applied linguistics and digital content management. She works with Merlin data scientists and product engineers to develop prompt templates for our new GenAI platform, DiscoveryPartner. She also advises Merlin clients on best practices for effective prompts and helps develop Merlin’s AI-driven content generation and analysis toolkit. Ms. Zhigmitova is based in Ulaanbaatar, Mongolia.

About Merlin Search Technologies

Merlin is a pioneering cloud technology company leveraging generative AI and cloud technologies to re-engineer legal investigation and discovery workflows. Our next-generation platform integrates GenAI and machine learning to make the process faster, easier and less expensive. We’ve also introduced Cloud Utility Pricing, an innovative software hosting model that charges by the hour instead of by the month, giving clients substantial savings on discovery costs when they turn off their sites.

With over twenty years of experience, our team has built and hosted discovery platforms for many of the largest corporations and law firms in the world. Learn more at merlin.tech.
